From Random Guesses to Revenue Drivers

Six figures in incremental revenue and tens of millions more in donations raised — built from zero baseline, under tight enterprise constraints.

Starting Without a Baseline

Conversion Rate Optimization · Evidence-based Design · Cross-functional Collaboration

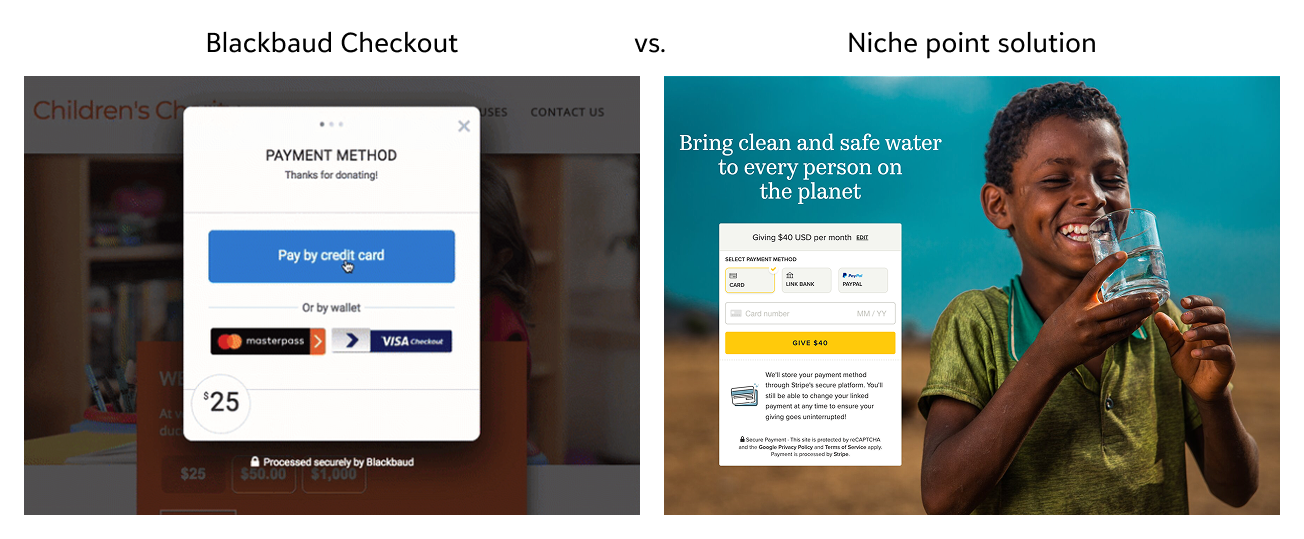

We faced increasing competitive pressure from point solutions offering modern checkout experiences. Our mandate was to optimize donation conversions — but we were starting from scratch with no A/B testing culture, no baseline data on donor behavior, and no shared understanding of what "better" even meant.

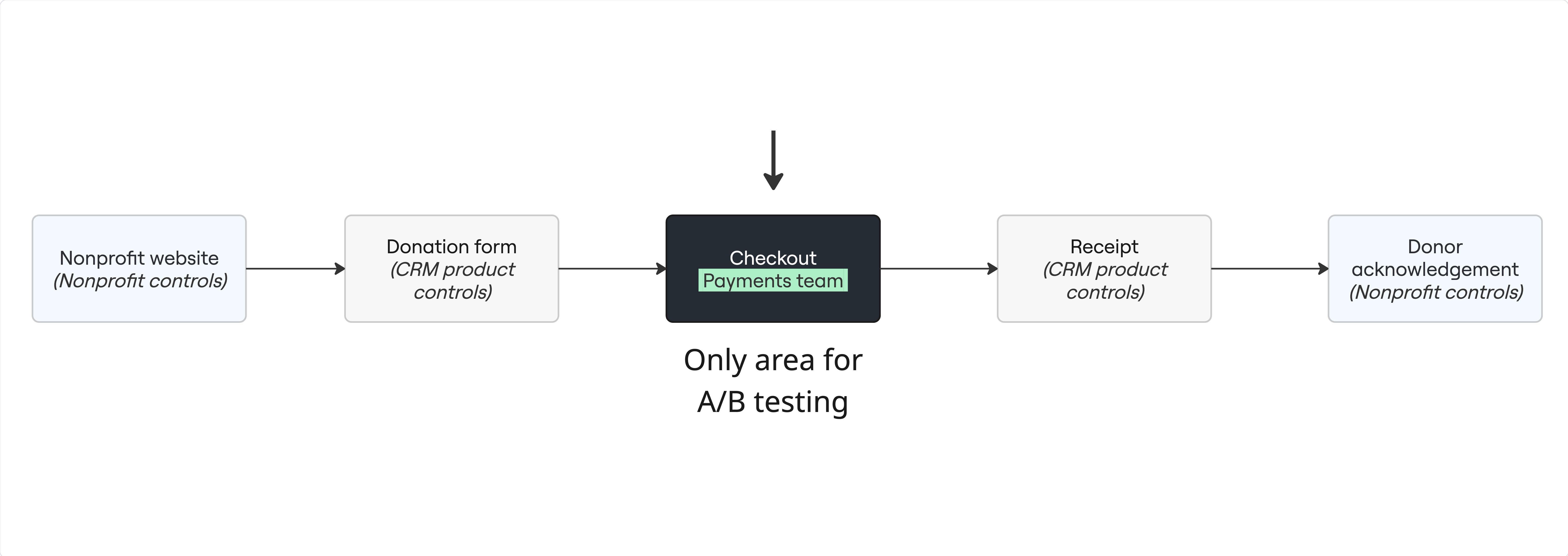

The structural challenge was just as significant: the payments team owned only the "middle" of the donation flow. We had zero control over how donors arrived or over the donation form that launched our payment service. We had to deliver outsized impact inside a narrow lane.

A Miro-based visualization of the donation flow — teams owned different segments, limiting our leverage to the payment screens.

Moving from Gut Feelings to a Disciplined Framework

Enterprise projects slow down when communication gets unclear. We faced pushback from teams that lacked the resources to prioritize our experiments, partly because they assumed another team was already working on a future payments flow that was still years out.

I facilitated workshops using an Impact vs. Effort matrix to prioritize the most valuable tests within rigid payment release schedules without overloading other product teams. This framework became the foundation for every test we ran — and the model other teams later adopted.
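For readers who have not used one, the scoring behind an Impact vs. Effort matrix can be sketched in a few lines. This is a minimal illustration, not the worksheet we used: the 1 to 5 scale, the midpoint, the quadrant labels, and the backlog entries are all assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentIdea:
    name: str
    impact: int  # 1 (low) to 5 (high), estimated in the workshop
    effort: int  # 1 (low) to 5 (high), including other teams' time


def quadrant(idea: ExperimentIdea, midpoint: int = 3) -> str:
    """Place an idea in one of the four Impact vs. Effort quadrants."""
    high_impact = idea.impact >= midpoint
    low_effort = idea.effort < midpoint
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritise"


def prioritise(ideas: list[ExperimentIdea]) -> list[ExperimentIdea]:
    """Order ideas by highest impact first, then by lowest effort."""
    return sorted(ideas, key=lambda idea: (-idea.impact, idea.effort))


# Hypothetical backlog: names and scores are invented for illustration.
backlog = [
    ExperimentIdea("Rebuild address form", impact=4, effort=5),
    ExperimentIdea("Tweak button copy", impact=2, effort=1),
    ExperimentIdea("Reorder payment method buttons", impact=4, effort=2),
]

for idea in prioritise(backlog):
    print(f"{idea.name}: {quadrant(idea)}")
```

Sorting on impact first and effort second keeps high-value, low-cost tests at the top of the list, which is what matters when release slots are fixed and other teams' time is scarce.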

The Research "Aha" That Proved Why Guessing Fails

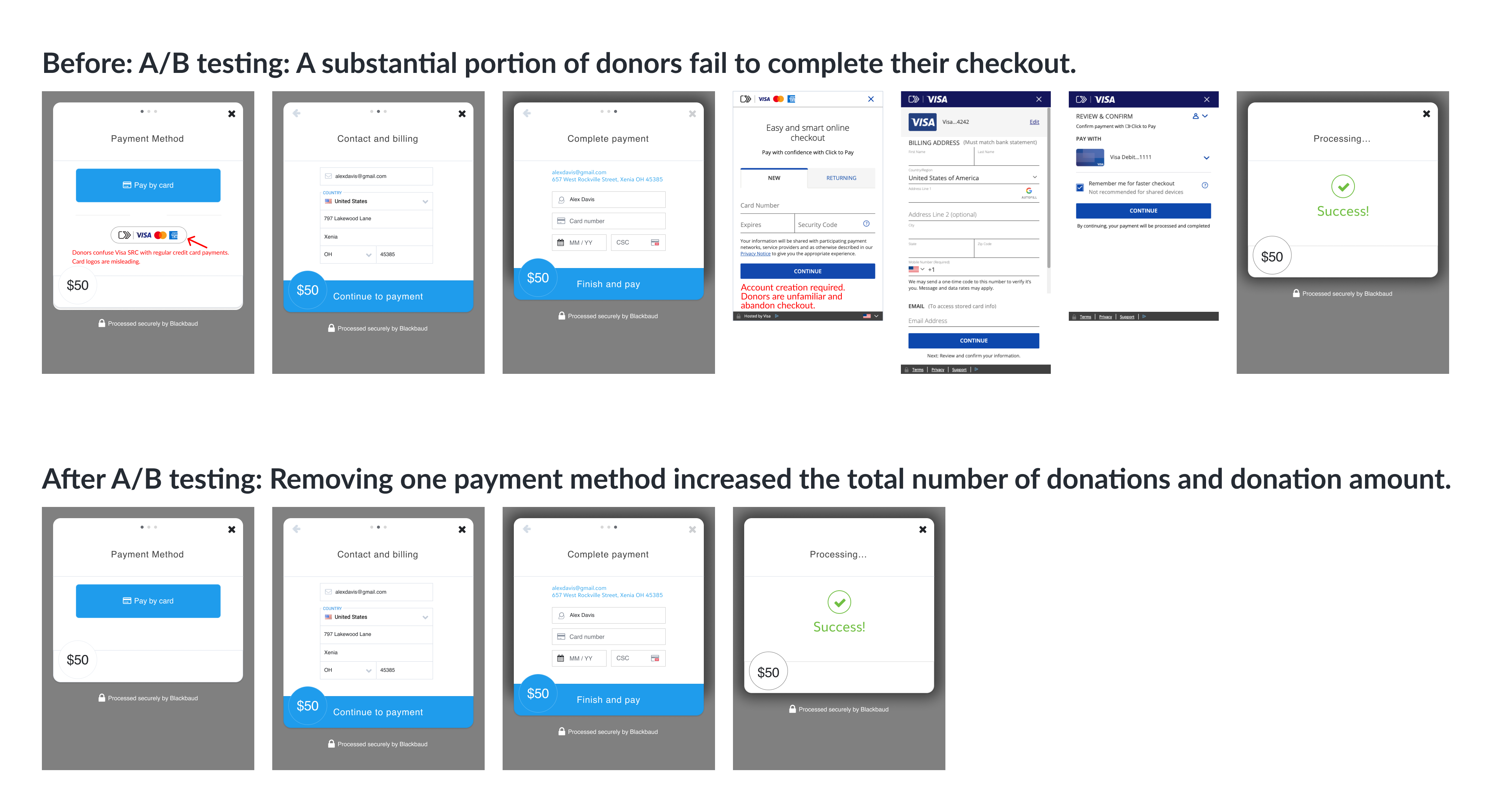

I conducted a cognitive walkthrough and analysed donor feedback to uncover a critical pain point: donors were confusing the Visa SRC digital wallet logo with the standard credit card button. When donors clicked Visa SRC by mistake, they encountered mandatory account creation and nine additional screens — causing significant drop-off.

A/B testing a fix for this confusion produced a 1.64% lift in donation completions, translating to a six-figure revenue increase over three months and an eight-figure increase in donations for our customers. More importantly, it validated the model: user research pinpoints where to test; A/B testing proves the impact.
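As a rough guide to how a lift like this is usually checked, the sketch below computes a relative lift and a pooled two-proportion z-test using only the standard library. It is an assumption about method, not a record of the analysis behind the figure above: the case study does not state whether the lift was relative or absolute, and the visitor and completion counts here are invented.

```python
import math


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Change in completion rate, expressed as a fraction of the control rate."""
    return (variant_rate - control_rate) / control_rate


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Pooled z statistic for the difference between two completion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / std_err


def two_sided_p_value(z: float) -> float:
    """Two-sided p-value under the standard normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts, invented for illustration only.
control_visitors, control_completions = 50_000, 30_000
variant_visitors, variant_completions = 50_000, 30_600

lift = relative_lift(
    control_completions / control_visitors,
    variant_completions / variant_visitors,
)
z = two_proportion_z(
    control_completions, control_visitors,
    variant_completions, variant_visitors,
)
print(f"relative lift: {lift:.2%}, z: {z:.2f}, p: {two_sided_p_value(z):.4f}")
```

A small percentage lift can still be statistically convincing when traffic is high, which is why sample size matters as much as the headline number.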

When Business Pressure Meets Design Ethics

Stakeholder Alignment · Design Leadership · Regulatory Risk Management

During testing, there was intense internal pressure to experiment with a "Complete Cover" fee model — asking donors to leave a voluntary "tip" to Blackbaud via a pre-checked box with low visual prominence.

I led the case against it. Two usability tests demonstrated that donors found the pattern deceptive and that it lowered overall donation amounts. I brought the findings to Legal and Compliance, framing the issue as regulatory risk, not just UX preference.

Bringing up legal risk was what moved leadership. The data showed it was bad for users. The compliance framing showed it was bad for the business. Both had to be true to win the argument.

— Honest reflection on what actually shifted the decision

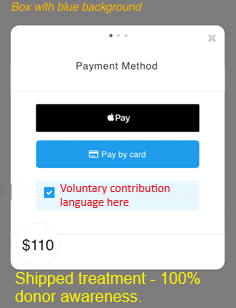

Low-visibility pre-selected checkbox. Blackbaud Checkout.

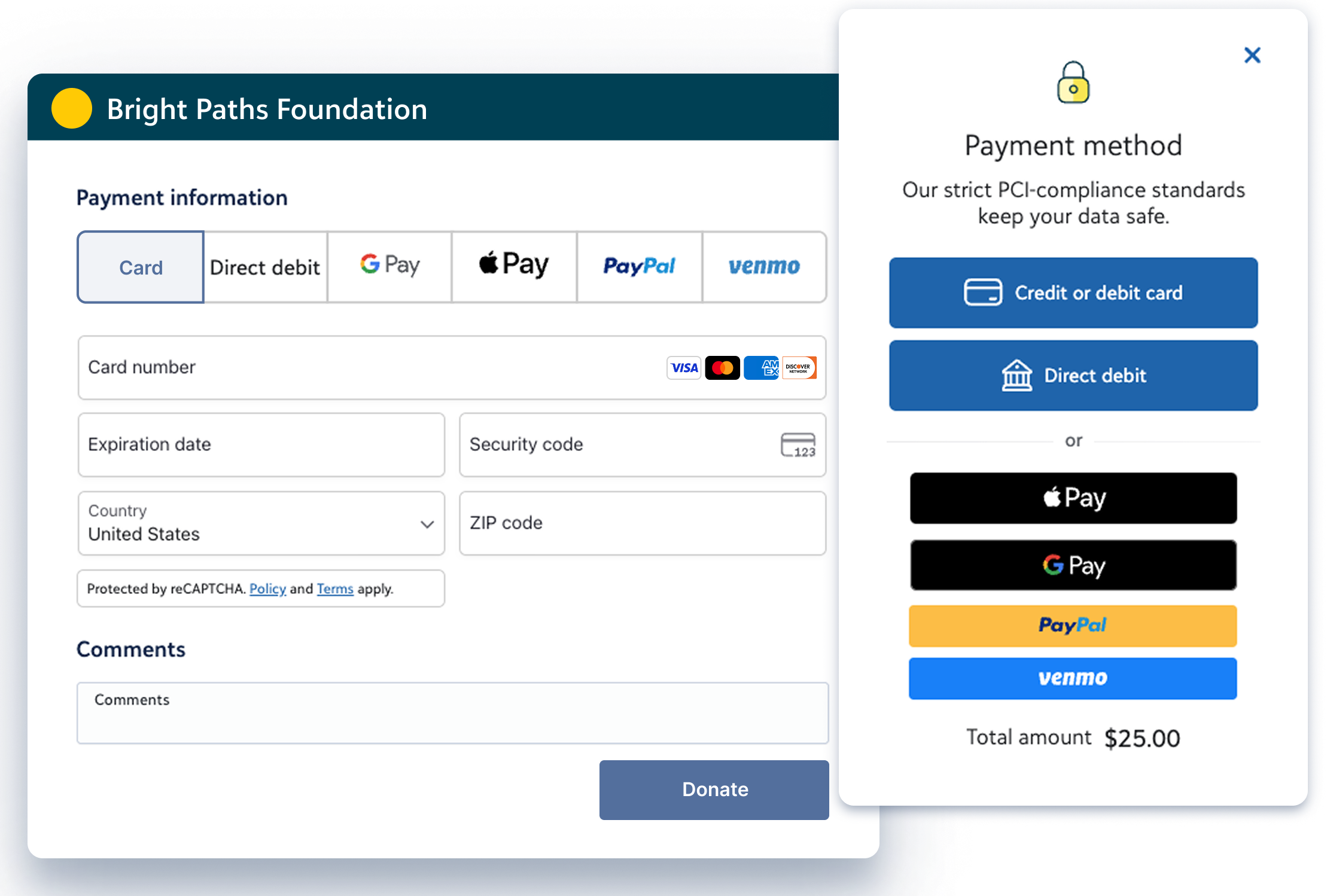

High-contrast treatment raising donor awareness. Blackbaud Checkout.

Two usability sessions and a compliance review replaced a dark pattern with a transparent, consent-first design.

Research Exhibit — Donor awareness of the checkbox

| Condition | Donors noticing the ask | Donors not noticing |
|---|---|---|
| Without blue-box visual | 66.7% | 33.3% |
| With blue-box visual | 100% | 0% |

Finding: The visual treatment was not a cosmetic change; it was a legal and ethical necessity backed by measured user behaviour.

From Shots in the Dark to a Strategic A/B Framework

Product Strategy · Revenue Growth · Cross-functional Leadership · Scalability

This project did more than raise revenue — it changed how the business approaches A/B testing entirely. Rather than picking random elements to test, product teams now use user research to identify where friction is highest, then test targeted interventions.

I co-authored two new KPIs that permanently redefined how "winning" tests are determined:

- Revenue per Visitor — replacing simple conversion rate as the primary success signal

- Donor Sentiment — measuring the emotional experience of checkout, not just the transaction
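To show why Revenue per Visitor can disagree with conversion rate, here is a minimal sketch. The formula used (total revenue divided by visitors) is the conventional definition and is assumed rather than taken from our KPI documentation; the variant figures are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantResult:
    name: str
    visitors: int
    completions: int
    total_revenue: float  # assumed to be in one currency, over one test period


def conversion_rate(result: VariantResult) -> float:
    """Share of visitors who completed a donation."""
    return result.completions / result.visitors


def revenue_per_visitor(result: VariantResult) -> float:
    """Total revenue spread across every visitor, converting or not."""
    return result.total_revenue / result.visitors


# Hypothetical results, invented for illustration only.
control = VariantResult("control", visitors=1_000, completions=100, total_revenue=5_000.0)
variant = VariantResult("variant", visitors=1_000, completions=110, total_revenue=4_950.0)

for result in (control, variant):
    print(
        f"{result.name}: conversion {conversion_rate(result):.1%}, "
        f"RPV {revenue_per_visitor(result):.2f}"
    )
```

In this invented case the variant converts more visitors yet earns less per visitor, because the average gift is smaller. A conversion-only scorecard would call it a win; Revenue per Visitor would not.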

We stopped guessing what to test. User research became our guide.

Honest Hindsight

I realised how much time I could have saved by involving Legal and Compliance at the beginning of discovery — not the end. Bringing up regulatory risk was ultimately what shifted leadership's position on the dark pattern. Had we partnered earlier, we could have cut weeks of stakeholder friction and used that time to run more tests. The lesson: in highly regulated enterprise environments, your most valuable design partners may sit outside the product org.